Table of contents

- A Shift in the Hiring Paradigm

- The Dual Nature of AI

- 1. Can ambitions meet algorithms to create a new era of AI-based hiring?

- 2. How does bias get coded into AI recruitment systems?

- 3. Why should fairness come first in AI-driven hiring?

- 4. Where does hidden bias often creep into recruitment processes?

- 5. How can organizations detect and address bias early in AI hiring?

- 6. What strategies can build firewalls against bias in recruitment?

- 7. Can diversity in hiring be achieved by design rather than chance?

- 8. How can predictive analytics forecast talent success without prejudice?

- 9. Why is trust the most valuable currency in AI recruitment?

- 10. What steps can organizations take to engineer bias-resistant hiring systems?

- 11. What role do recruiters play as the human shield for AI fairness?

- 12. How can organizations lead the revolution toward bias-free recruitment?

- 13. Why is candidate experience the first touchpoint of fairness in hiring?

- 14. How do global and legal perspectives shape bias-free hiring practices?

- 15. What does the future hold for managing bias in AI recruitment?

- Conclusion

- FAQs

A Shift in the Hiring Paradigm

Hiring used to be messy, instinct-driven, and slow. A hiring manager’s gut, a referral from a trusted colleague, a memorable interview: these small, human signals decided careers. Today, the scene looks very different. Employers rely on AI-powered recruitment platforms to process thousands of applications in minutes, surface likely fits, and produce measurable signals about candidates’ job fitness. But models are only as blind as the data that trains them. If your historical hiring data reflects decades of favouritism, geographic bias, or narrow hiring practices, then the “objective” score an AI gives can end up encoding the same old problems at scale. In plain terms: a biased past can become an automated future.

This is where the big idea lies: AI should not be a replacement for fairness. It must be its amplifier, but in the right direction. Technology can speed decision-making, but honest, human-centred guardrails must ensure those decisions are justified. That balance of speed with fairness is the new HR frontier.

The Dual Nature of AI

Artificial intelligence can be both an equalizer and an amplifier. On one hand, it removes human subjectivity and ensures consistency; on the other, if built on biased inputs, it mirrors inequality faster than any recruiter could. Bias does not always come from malice; sometimes it is buried deep in datasets shaped by decades of hiring trends. The outcome can be exclusionary systems that reward familiarity over capability.

Therefore, the goal is not to reject AI but to balance automation with accountability. Human oversight, ethical audits, and transparent algorithms ensure that recruitment stays inclusive and grounded in fairness. This partnership between data and empathy is what defines the future of hiring.

1. Can ambitions meet algorithms to create a new era of AI-based hiring?

Bridging Data and Dreams

AI systems are brilliant at pattern recognition: which skills correlate with successful performance, which career paths predict promotions, and which signals hint at long-term retention. But ambition, the spark that pushes people to move beyond what’s on paper, is trickier to capture. Ambition shows up as lateral career moves, project curiosity, unusual side hustles, or odd but meaningful indicators that a resume scanner might ignore.

ValueMatrix purposefully builds models that do not treat ambition as noise. Instead, they fold in signals such as cross-disciplinary projects, entrepreneurial stints, and role transitions that show learning agility. The result? Candidates who might have otherwise been filtered out get a fair hearing.

Recognizing Real-World Achievers

Consider a developer who taught herself data science in the evenings and shipped two side-projects that solved real user problems. Traditional filters, such as alma mater or years at big-name firms, could easily miss her. ValueMatrix’s system assigns tangible weight to outcomes: projects delivered, complexity handled, and demonstrated problem-solving. These are measurable, and they matter.

Outcome for Recruiters

For recruiting teams, this approach widens the funnel in meaningful ways. You get lists that include non-linear careers and people with unique strengths, and not just those who fit a historical mould. That leads to better hires and more resilient teams.

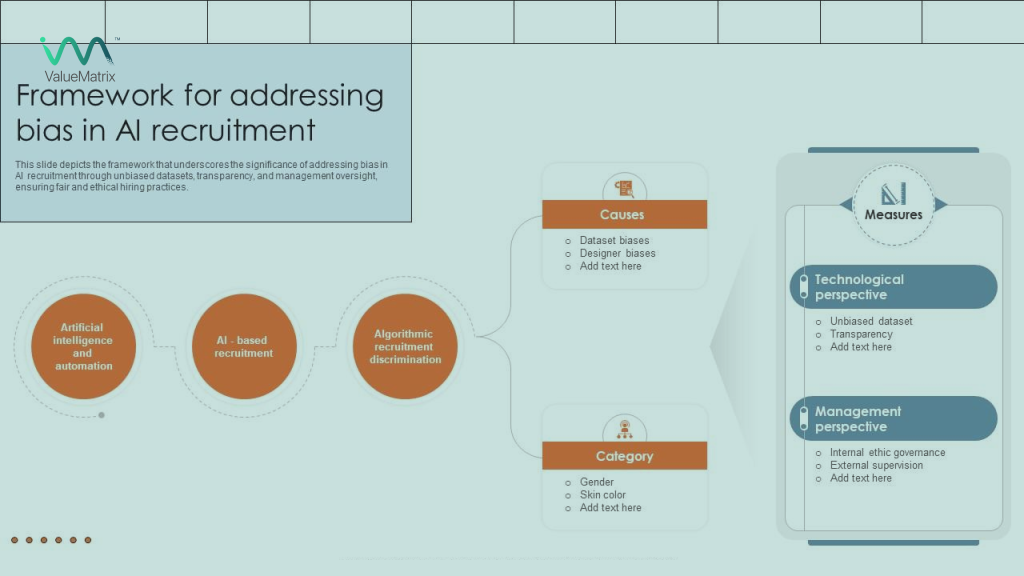

2. How does bias get coded into AI recruitment systems?

Bias by Design or by Data

It is tempting to point fingers: “Someone designed bias into the model.” But the reality is often less malicious and more structural. Bias creeps in through training data and organizational practices. If a firm historically hired heavily from two institutions, future models will over-index on similar credentials. The code did not start with prejudice, but it inherited patterns that reflect human bias.

Invisible Patterns, Visible Damage

The danger is cumulative. Small skews, such as more hires from one gender, one region, or one career track, get baked into automated recommendations. Over time, this narrows pipelines, reduces diversity, and worsens outcomes like retention and innovation. Bias is not hypothetical; it causes real workplace homogeneity and missed opportunities.

ValueMatrix’s Remedy

ValueMatrix takes several practical steps: diversifying training datasets, simulating hiring scenarios that challenge defaults, and running fairness tests that reveal systemic skews. The system actively penalizes over-reliance on pedigree markers and rewards real-world outcomes. It is not magic; it is a process, repeated and audited.
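One widely used fairness test of this kind compares selection rates across groups. The sketch below is an illustration only, not a description of ValueMatrix's internal tests: it computes each group's selection rate and its ratio to the highest rate, then flags ratios below 0.8, the "four-fifths" rule of thumb from the U.S. Uniform Guidelines on Employee Selection Procedures. The group labels and counts are invented for the example.

```python
from collections import Counter

def selection_rates(records):
    """records: iterable of (group, was_selected) pairs. Returns {group: rate}."""
    applied, selected = Counter(), Counter()
    for group, was_selected in records:
        applied[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / applied[g] for g in applied}

def adverse_impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    return {g: (r / best if best > 0 else 1.0) for g, r in rates.items()}

# Hypothetical shortlist outcomes, invented purely for illustration.
records = ([("A", True)] * 30 + [("A", False)] * 70
           + [("B", True)] * 18 + [("B", False)] * 82)

rates = selection_rates(records)
ratios = adverse_impact_ratios(rates)
# Groups whose ratio falls below four-fifths deserve a closer look.
flagged = sorted(g for g, ratio in ratios.items() if ratio < 0.8)
print(rates, ratios, flagged)
```

A flag here is a prompt for investigation rather than proof of discrimination; small samples in particular can produce misleading ratios.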

3. Why should fairness come first in AI-driven hiring?

Fairness as the Foundation

Fairness should not be an afterthought bolted on at the demo stage. For ValueMatrix, fairness is architecture. Practically, that means three simple principles: transparency, traceability, and continuous improvement.

Transparency as Trust Currency

When a hiring team sees why a candidate scored the way they did, including the features that drove the ranking, it builds trust. Candidates deserve the same clarity. Explainability converts a black box into a glass box. When both sides can inspect the “why,” adoption becomes easier and complaints fall.
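To make the "glass box" idea concrete, here is a minimal sketch that assumes a simple linear scoring model, where each feature's contribution is its weight times its value. The feature names and weights are hypothetical; real ranking models are usually more complex and need dedicated explanation techniques.

```python
def explain_score(weights, features):
    """Return the total score and per-feature contributions, largest first."""
    contributions = {
        name: weights[name] * features.get(name, 0.0) for name in weights
    }
    total = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return total, ranked

# Hypothetical weights and candidate values, for illustration only.
weights = {"projects_delivered": 0.5, "work_sample_score": 0.4, "years_experience": 0.1}
candidate = {"projects_delivered": 4, "work_sample_score": 8, "years_experience": 3}

total, ranked = explain_score(weights, candidate)
for name, value in ranked:
    print(f"{name}: {value:.2f}")
print(f"total: {total:.2f}")
```

A breakdown like this lets a recruiter see at a glance which signals drove a ranking and challenge any that look like proxies.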

Efficiency Meets Equity

There is a myth that fairness slows things down. In fact, fixing bias early prevents downstream churn and costly mis-hires. By embedding fairness into algorithms, ValueMatrix makes speed and equity mutually reinforcing.

4. Where does hidden bias often creep into recruitment processes?

Legacy Data and Overweight Pedigree

The usual suspects: old resumes, referral-heavy hiring, and filters that privilege famous logos. These create a false shorthand: prestige equals quality. But a candidate’s university or company label is only a proxy, and often an unreliable one.

Overfitting to Historical Signals

Many systems overfit to what has worked in the past. That looks tidy in the data, but it is socially narrow. Without intervention, the model keeps selecting for a past that the business may have outgrown.

ValueMatrix’s Merit-First Framework

ValueMatrix instead scores “what was achieved” over “where it was achieved.” Completed projects, complexity of impact, and evidence of initiative become first-class inputs. This shifts the focus from pedigree to performance and opens doors for high-potential candidates from non-traditional paths.

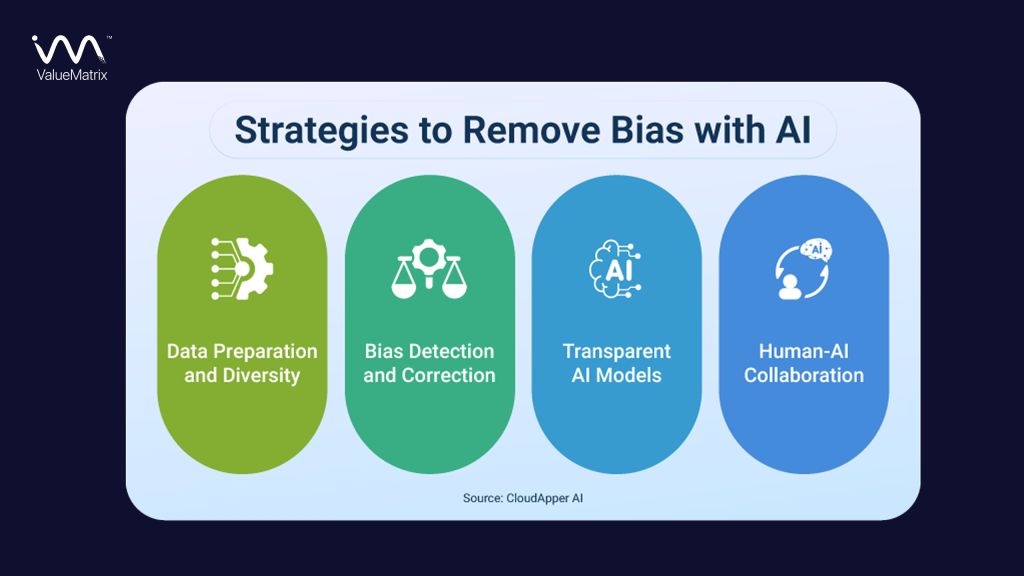

5. How can organizations detect and address bias early in AI hiring?

Early Detection Is Prevention

Good detection is proactive. Before models go live, they should be stress-tested with synthetic and historical datasets that reveal where bias could surface. Mock hiring rounds and scenario testing (e.g., “what if 60% of candidates come from one region?”) surface weak points before real people are affected.
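A scenario test like the 60% example can be sketched in a few lines. The code below builds a synthetic pool in which 60% of candidates come from one region but skill is spread identically in both, then checks whether the shortlist mirrors the applicant mix. Both scoring functions are hypothetical stand-ins; in practice you would plug in the model under test.

```python
from collections import Counter

def region_shares(candidates):
    """Share of candidates per region."""
    counts = Counter(c["region"] for c in candidates)
    return {r: n / len(candidates) for r, n in counts.items()}

def shortlist(candidates, scorer, k):
    """Top-k candidates by score."""
    return sorted(candidates, key=scorer, reverse=True)[:k]

def max_share_gap(pool, picked):
    """Largest difference between a region's pool share and shortlist share."""
    pool_s, picked_s = region_shares(pool), region_shares(picked)
    return max(abs(picked_s.get(r, 0.0) - share) for r, share in pool_s.items())

# Synthetic pool: 60% "north", 40% "south", same skill spread in each region.
pool = ([{"region": "north", "skill": i % 10} for i in range(60)]
        + [{"region": "south", "skill": i % 10} for i in range(40)])

def skill_only(c):
    return c["skill"]

def region_leaky(c):
    # Deliberately flawed scorer that rewards region, to show what a failure looks like.
    return c["skill"] + (2.5 if c["region"] == "north" else 0.0)

gap_fair = max_share_gap(pool, shortlist(pool, skill_only, k=20))
gap_leaky = max_share_gap(pool, shortlist(pool, region_leaky, k=20))
print(gap_fair, gap_leaky)
```

Because skill is distributed identically across regions, a scorer that only looks at skill keeps the shortlist at the same 60/40 mix, while the leaky scorer pushes it far from the pool.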

Continuous Learning for Continuous Fairness

Bias is not static. As work and society change, so will the signals for success. ValueMatrix builds feedback loops: after each hire, the model checks how that hire actually performed, and adjusts weighting. This keeps the system adaptive.
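The measuring half of such a feedback loop can be illustrated as follows. This sketch assumes predicted scores and observed post-hire performance are on the same 0 to 1 scale, and it only reports the average prediction error per group; how weights are then adjusted is left open. All field names and values are hypothetical.

```python
from collections import defaultdict

def mean_error_by_group(hires):
    """Average of (predicted - observed) per group; negative means under-prediction."""
    sums = defaultdict(lambda: [0.0, 0])
    for h in hires:
        entry = sums[h["group"]]
        entry[0] += h["predicted"] - h["observed"]
        entry[1] += 1
    return {g: total / n for g, (total, n) in sums.items()}

# Hypothetical hires, invented for illustration.
hires = [
    {"group": "A", "predicted": 0.8, "observed": 0.7},
    {"group": "A", "predicted": 0.6, "observed": 0.6},
    {"group": "B", "predicted": 0.5, "observed": 0.7},
    {"group": "B", "predicted": 0.4, "observed": 0.6},
]

errors = mean_error_by_group(hires)
print(errors)
```

A consistently negative error for one group suggests the model is underrating people who go on to perform well, which is exactly the kind of signal a feedback loop should surface.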

Benefits for HR Leaders

What does this look like in practice? Recruiters sleep better. They have measurable dashboards pointing to where the model can improve. And crucially, they can show evidence to leadership and regulators that the system does not simply reproduce the past.

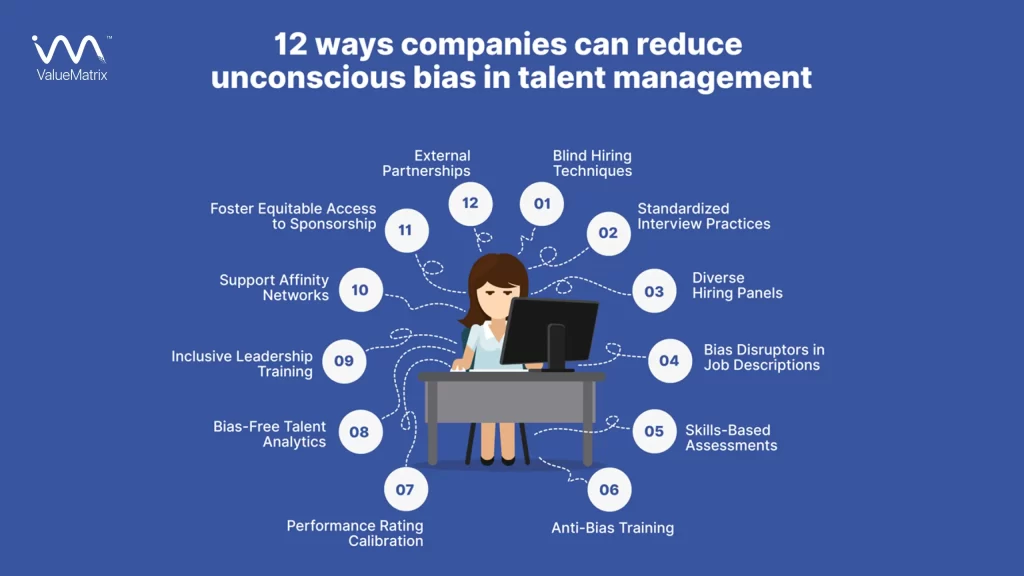

6. What strategies can build firewalls against bias in recruitment?

Blind Screening: Let Skills Speak Louder Than Labels

Real fairness starts in system design. Do not treat audits as a cleanup step. Embed guardrails in data collection, feature selection, and model training. ValueMatrix enforces rules such as limiting weight for demographic proxies and prioritizing outcome-based signals. The algorithm evaluates candidates purely on skill, experience, and compatibility with job criteria. In practice, this means two identical résumés, one from “John Smith” and one from “Jamal Ahmed”, will be judged solely on merit, not name recognition.
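As a rough illustration of blind screening, the sketch below strips identifying fields before a résumé reaches the scorer, so two otherwise identical résumés must receive the same score. The field names and the scoring formula are assumptions made for the example, not ValueMatrix's actual schema or model.

```python
# Hypothetical fields treated as identity or pedigree markers.
MASKED_FIELDS = {"name", "school", "location"}

def mask(resume):
    """Return a copy of the résumé without the masked fields."""
    return {k: v for k, v in resume.items() if k not in MASKED_FIELDS}

def score(resume):
    """Hypothetical scorer that only ever sees the masked résumé."""
    blind = mask(resume)
    return 2 * blind["projects_delivered"] + blind["work_sample_score"]

resume_a = {"name": "John Smith", "projects_delivered": 3, "work_sample_score": 7}
resume_b = {"name": "Jamal Ahmed", "projects_delivered": 3, "work_sample_score": 7}

print(score(resume_a), score(resume_b))
```

Masking removes direct identifiers only. Other fields can still act as proxies, which is why masking is usually paired with outcome audits.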

Explainable AI: Transparency Builds Trust

A sandbox lets teams trial models on masked data and simulate hiring outcomes. Here, you deliberately expose models to edge cases to observe behaviour. Does the model still behave when candidate names, schools, and locations are anonymized? If not, adjustments are needed. ValueMatrix provides full visibility into its ranking process, revealing why a candidate was shortlisted or filtered out. Instead of functioning as a mysterious black box, its explainable AI model makes every decision traceable.

Ethical Algorithms: Fairness by Design, Not by Chance

Over time, preventive design means fewer surprises, better candidate experiences, and demonstrable metrics showing the organization is acting responsibly and not just saying it.

7. Can diversity in hiring be achieved by design rather than chance?

Intentional Inclusion

If you want diverse teams, you must build the process to produce them. ValueMatrix weaves DEI targets into sourcing and shortlisting layers so candidates from underrepresented backgrounds get visibility early.

Metrics That Matter

Dashboards show representation across stages: who applies, who gets interviewed, and who receives offers. When a drop happens at any stage, teams can diagnose and fix the leak.
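The numbers behind such a dashboard are simple to compute. This sketch assumes each candidate record holds a group label and the furthest stage reached, and it reports stage-to-stage pass-through rates per group. Stage names, groups, and counts are all hypothetical.

```python
STAGES = ["applied", "interviewed", "offered"]

def stage_counts(candidates):
    """candidates: list of (group, furthest_stage). Counts who reached each stage."""
    counts = {}
    for group, furthest in candidates:
        per_group = counts.setdefault(group, dict.fromkeys(STAGES, 0))
        for stage in STAGES[: STAGES.index(furthest) + 1]:
            per_group[stage] += 1
    return counts

def pass_through(counts):
    """Conversion rate between consecutive stages, per group."""
    return {
        group: {
            f"{a}_to_{b}": (c[b] / c[a] if c[a] else 0.0)
            for a, b in zip(STAGES, STAGES[1:])
        }
        for group, c in counts.items()
    }

# Invented funnel data for two groups.
candidates = ([("A", "offered")] * 10 + [("A", "interviewed")] * 30 + [("A", "applied")] * 60
              + [("B", "offered")] * 4 + [("B", "interviewed")] * 12 + [("B", "applied")] * 64)

rates = pass_through(stage_counts(candidates))
print(rates)
```

In this made-up data both groups convert interviews to offers at the same rate, but group B is interviewed half as often, which points to the screening stage as the leak.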

Long-Term Payoff

Diverse teams are more creative and perform better commercially. This is not philanthropy; it is a productivity strategy. Building inclusion into the model increases business value and lowers hiring risk.

8. How can predictive analytics forecast talent success without prejudice?

Forecasting Without Stereotyping

Predictive models should rely on predictors that genuinely correlate with job success: task performance samples, practical assignments, and behaviour-based scoring. Historical proxies such as alma mater, hometown, or gender must be de-emphasized.

Balancing Accuracy with Fairness

There will be trade-offs. Sometimes a feature improves short-term accuracy but hurts fairness. ValueMatrix provides decision-makers with that trade-off information so HR can choose a balance that matches company values.
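One way to present that trade-off is a side-by-side report of accuracy and a fairness metric for each model variant. The sketch below uses the gap between group selection rates as the fairness metric, which is one of several possible choices. The labels and predictions are invented, and the variant names are hypothetical.

```python
def accuracy(preds, labels):
    """Share of predictions that match the observed outcome."""
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)

def selection_gap(preds, groups):
    """Difference between the highest and lowest group selection rates."""
    totals, picked = {}, {}
    for p, g in zip(preds, groups):
        totals[g] = totals.get(g, 0) + 1
        picked[g] = picked.get(g, 0) + p
    rates = [picked[g] / totals[g] for g in totals]
    return max(rates) - min(rates)

# Invented data: 1 means "selected" in preds and "succeeded" in labels.
groups = ["A"] * 5 + ["B"] * 5
labels = [1, 1, 1, 0, 0, 1, 1, 0, 0, 0]
variants = {
    "with_feature": [1, 1, 1, 0, 0, 1, 0, 0, 0, 0],
    "without_feature": [1, 1, 0, 0, 0, 1, 1, 0, 0, 1],
}

report = {
    name: {"accuracy": accuracy(p, labels), "selection_gap": selection_gap(p, groups)}
    for name, p in variants.items()
}
print(report)
```

Here the variant that keeps the feature is slightly more accurate but selects the two groups at very different rates. Which point on that curve is acceptable is a values decision for HR, not a purely technical one.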

Empowering HR Through Insight

When HR has access to transparent performance forecasts and understands their limitations, they can pair machine insight with human context to make balanced decisions.

9. Why is trust the most valuable currency in AI recruitment?

Transparency Creates Confidence

If you cannot explain a score, you cannot defend a hire. ValueMatrix generates explainable outputs that HR can present internally and, where appropriate, explain to candidates. This reduces suspicion and supports compliance.

Candidate Trust, Too

Candidates respond better to processes they understand. If a platform tells an applicant why they scored a certain way and what they could improve, the experience becomes constructive rather than alienating.

Impact on Brand

Companies that use transparent systems get better employer branding. Candidates tell their networks about the fair treatment, boosting attraction without extra ad spend.

10. What steps can organizations take to engineer bias-resistant hiring systems?

Tech That Self-Corrects

Continuous monitoring is non-negotiable. ValueMatrix deploys real-time checks that flag drift and bias indicators. When anomalies appear, the system escalates for human review.
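A common drift indicator is the Population Stability Index (PSI), which compares how candidate scores are distributed across bins today against a baseline. The sketch below is one possible check, not a description of ValueMatrix's monitoring; the bin proportions are invented and the alert threshold is an assumption that teams should tune for themselves.

```python
import math

PSI_ALERT = 0.1  # Assumed threshold for escalation; a tunable rule of thumb.

def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions (proportions)."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        total += (a - e) * math.log(a / e)
    return total

# Invented score distributions over four score bands.
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.10, 0.20, 0.30, 0.40]

drift = psi(baseline, current)
needs_human_review = drift > PSI_ALERT
print(round(drift, 3), needs_human_review)
```

Running the same check separately for each demographic group can reveal drift that affects one group more than others.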

Collaborative Oversight

Bias management is not just the data team’s job. Engineers, HR leads, legal counsel, and ethics advisors must co-own the cycle. That cross-functional oversight prevents blind spots.

Benefit

The net effect is resilience: systems that adapt to data and cultural shifts while maintaining fairness as a core outcome.

11. What role do recruiters play as the human shield for AI fairness?

Empathy Meets Evidence

AI brings signal; humans provide context. Final hiring decisions belong to people who can interpret nuances like career breaks, cultural context, or pockets of untapped potential that a model can miss. Recruiters serve as the ethical gatekeepers, validating machine-generated recommendations and ensuring that decisions align with human values.

Empathy in Analytics: A Partnership Between Human and Machine

ValueMatrix treats recruiters not as end-users but as collaborators. It provides detailed reasoning behind each candidate match so that hiring managers can question, adjust, or refine recommendations. That habit turns raw scores into informed conversations rather than blind acceptance.

The Real Meaning of Human Oversight

In essence, human recruiters form the “bias firewall.” They interpret machine logic with moral judgment, context awareness, and lived experience. When AI suggests, humans decide. That synergy defines fairness in modern hiring.

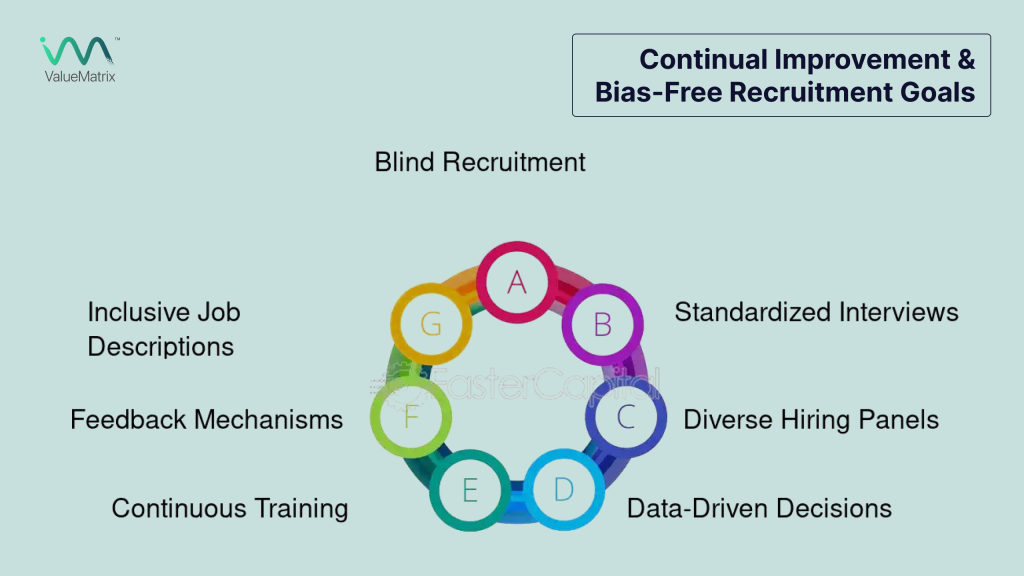

12. How can organizations lead the revolution toward bias-free recruitment?

Bias-Free Hiring: From Policy to Practice

ValueMatrix does not sell features; it sells a fairness workflow. From data sourcing policies to model governance, the platform preserves fairness as an engineering practice, not as a marketing slogan. ValueMatrix helps companies translate DEI ambitions into measurable recruitment outcomes.

Tangible Impact of Fairness-First Systems

Clients get more than software: they get audit frameworks, training, and ongoing strategic counsel. That partnership model ensures the technical and human parts of hiring evolve together. The system’s data-backed reporting makes fairness measurable, enabling HR leaders to show diversity growth backed by evidence.

From Early Adopters to Industry Leaders

The true leaders of tomorrow are those adopting ethical AI today. Companies working with ValueMatrix report better candidate satisfaction, improved diversity metrics, and stronger retention: measurable wins that justify the investment.

13. Why is candidate experience the first touchpoint of fairness in hiring?

Perception Shapes Experience

A candidate’s experience begins the moment they see a job post. Is the language inclusive? Is the application process transparent and reasonable? ValueMatrix helps companies audit and fix candidate-facing touchpoints, and it enhances transparency by communicating each step clearly, so candidates understand how they are being assessed.

Respect in Every Interaction

The platform values both credentials and capability, thereby making candidates feel seen beyond their résumés. This recognition encourages engagement and trust, strengthening employer branding in the process.

Fairness as Brand Equity

A respectful candidate journey elevates employer brand and reduces dropout rates during the hiring funnel. That is how fairness transforms into brand equity.

14. How do global and legal perspectives shape bias-free hiring practices?

Fairness Knows No Borders

A patchwork of legal frameworks is shaping how recruitment AI must behave. Non-compliance is not just unlawful; it is also reputationally damaging. ValueMatrix’s architecture is designed with global compliance in mind, aligning with GDPR (Europe), EEOC guidelines (U.S.), and CCPA (California).

Compliance as a Confidence Multiplier

The platform includes compliance checks that map local legal requirements to model behaviour. This reduces legal risk and simplifies cross-border hiring.

The Future of Global Ethics in AI Hiring

When legal and ethics boxes are checked programmatically, senior leaders can scale hiring without fearing hidden liabilities, unify fairness across regions, and turn compliance into a competitive advantage.

15. What does the future hold for managing bias in AI recruitment?

From Correction to Prevention

The future is not reactive audits; it is predictive fairness. Models will simulate social impact before deployment, identifying bias signals and guiding design changes proactively.

Continuous Learning, Continuous Fairness

The system learns from every hiring cycle, constantly improving its understanding of what is “fair.” Expect tighter feedback loops where recruiter inputs and candidate outcomes immediately refine model logic. This fluid, iterative governance will make fairness adaptive rather than static.

Ethical AI as the New Standard

The future of recruitment will belong to companies that merge accountability with automation. By prioritizing human-centric AI, ValueMatrix ensures that innovation never comes at the cost of integrity.

Conclusion

Bias in AI hiring is not a failing of the toolset; it is a leadership problem. Companies that treat fairness as strategic and not incidental will reap the benefits in the form of better hires, a stronger culture, and a resilient reputation. ValueMatrix shows that with deliberate design, transparent practice, and constant human partnership, bias can be contained and hiring can finally reflect potential over pedigree.

FAQs

Absolute neutrality is unlikely, but bias can be driven to negligible levels through continuous audits and adaptive fairness mechanisms.

After every hiring cycle is ideal; at a minimum, schedule quarterly reviews aligned with hiring volumes and business cycles.

By offering explainability, clear feedback, and transparent assessment rubrics that candidates can view and act upon.

It combines explainable AI with process-driven human oversight and continual fairness testing: a governance-first approach.

Yes. Modular APIs and connectors let ValueMatrix work with most ATS and HRMS platforms.

Through embedded policy engines that reference GDPR, EEOC, CCPA, and local regulations; flagged non-compliant flows trigger human review.

No. It reduces transactional load but elevates recruiter impact: more strategic, humane, and consultative.

Yes. By neutralizing proxies and amplifying outcome-based signals, the platform helps organizations build genuinely diverse teams.

By comparing hiring predictions to post-hire performance and retention metrics, the platform ties hiring quality to business outcomes.

Yes. From startups to global enterprises, its modular and audited architecture adapts to scale and complexity.

About Us

ValueMatrix is an AI-powered talent intelligence platform that helps companies hire better, faster, and without bias. We go beyond resumes to assess skills, behavioral traits, and cultural fit using advanced AI and proven psychological frameworks. Our platform delivers data-driven insights that improve hiring accuracy, reduce time-to-hire, and elevate candidate quality.

ValueMatrix AI enables hiring teams to make confident hiring decisions and build high-performing teams at scale.