The Performance Improvement Plan (PIP) has traditionally been the dark room of corporate life, a place where employees feel trapped by subjective bias and employers feel burdened by administrative risk. As artificial intelligence enters performance management, however, the PIP is shifting from a static document to a data-driven ecosystem. Do AI appraisal systems bring a blessing of fairness or a curse of cold surveillance?

1. The Fear of Impersonal, Cold Evaluation

The Logic

Employees fear that a “robot boss” cannot see the “why” behind poor performance, such as a family crisis or a toxic teammate. This concern often arises when AI is introduced into performance management. However, the human alternative is often plagued by affinity bias, where managers subconsciously favor those similar to them, sometimes ignoring actual output, raising questions around AI fairness in the workplace versus human subjectivity.

Neutralizing Language Bias

Traditional reviews often rely on gendered or subjective language: a woman may be called “aggressive” for the same behavior that earns a man the label “assertive.” Natural Language Processing tools used in AI appraisal systems can flag such biased phrases in real time and shift the focus to objective achievements, such as “met 90% of deadlines,” instead of vague personality critiques. This supports more ethical automated employee evaluation based on consistent performance rather than a manager’s mood.
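
To make the idea concrete, here is a minimal Python sketch of phrase flagging. It assumes a small, hypothetical hand-written lexicon (`FLAGGED_TERMS`) and simple word-boundary matching. Production NLP tools use trained models and far richer context, so treat this only as an illustration of the flag-and-suggest pattern.

```python
import re

# Hypothetical lexicon: each flagged term maps to a suggestion that steers the
# reviewer toward observable, measurable behavior. Real tools would use a
# trained model and a much larger, validated vocabulary.
FLAGGED_TERMS = {
    "aggressive": "describe the specific behavior and its impact",
    "abrasive": "describe the specific behavior and its impact",
    "emotional": "cite the concrete incident instead of a personality label",
    "not a team player": "name the collaboration outcome that was missed",
}

def flag_biased_phrases(review_text):
    """Return (term, suggestion) pairs for every flagged term found in the text."""
    findings = []
    lowered = review_text.lower()
    for term, suggestion in FLAGGED_TERMS.items():
        # \b word boundaries avoid matching inside longer words.
        if re.search(r"\b" + re.escape(term) + r"\b", lowered):
            findings.append((term, suggestion))
    return findings

review = "She is aggressive in meetings but met 90% of deadlines."
for term, suggestion in flag_biased_phrases(review):
    print(f"Flagged '{term}': {suggestion}")
```

A keyword list cannot judge whether a word is fair in context; it only prompts the reviewer to replace a label with evidence.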

Replacing Mood with Metrics

Human managers are susceptible to recency bias, where a mistake made yesterday outweighs months of solid work. AI mitigates this by analyzing long-term data streams through performance feedback AI tools, so evaluations reflect overall consistency instead of short-term frustration. This emotional distance protects employees from being judged on personal clashes or temporary dips in morale.
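
A minimal sketch of the difference between a recency-driven judgment and a long-window view, assuming hypothetical weekly quality scores on a 0 to 100 scale. The function names `recency_view` and `long_term_view` are illustrative, not part of any real tool.

```python
from statistics import mean

def recency_view(scores):
    """What a recency-biased reviewer sees: only the most recent score."""
    return scores[-1]

def long_term_view(scores):
    """Average over the full evaluation window, so one bad week is diluted."""
    return mean(scores)

# Hypothetical weekly quality scores over a quarter; only the last week dips.
weekly_scores = [88, 90, 85, 92, 89, 91, 87, 90, 88, 93, 90, 89, 55]
print(recency_view(weekly_scores))              # 55
print(round(long_term_view(weekly_scores), 1))  # 86.7
```

A plain average can also hide a genuine recent decline, so a sensible design would likely show both views side by side rather than replace one with the other.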

The Baseline of Evidence

By using AI to create a skills score or performance baseline as part of an AI performance improvement plan, organizations move away from the manager lottery, where careers depend on whether one has a strict or a lenient boss. Instead, every employee is measured against a universal, evidence-based standard that stays stable over time, which builds trust.

Identifying Hidden Obstacles

While a manager may read a drop in output as laziness, employee monitoring AI, used responsibly, can correlate that decline with factors such as system lag or sudden ticket surges. This allows evaluations to account for team dynamics or technical barriers that a human eye might miss, in line with HR automation ethics.

A Shield for Introverted Talent

Traditional PIPs often favor those who speak the loudest or project confidence. AI-driven systems within AI performance improvement plan frameworks focus on output and quality, ensuring quieter yet highly productive employees are judged by delivered value rather than meeting-room performance.

Real-Life Scenario: IBM

IBM has used Watson AI to analyze employee skills and performance data. By moving away from purely subjective annual reviews toward data-backed insights, the company aims to strengthen AI PIP HR ethics, provide a more objective skills score, and reduce the friendship factor in performance discussions.

Reference: Are AI-driven performance evaluations truly fair? – People Matters

2. The Concern Over ‘Black Box’ Decisions

The Logic

A “Black Box” is a system where you see the result but don’t understand the logic behind it. If an AI says you are failing but cannot explain how it reached that conclusion, the system feels like a digital judge with no jury or room for dialogue. This fear becomes central when AI in performance management systems influences career outcomes.

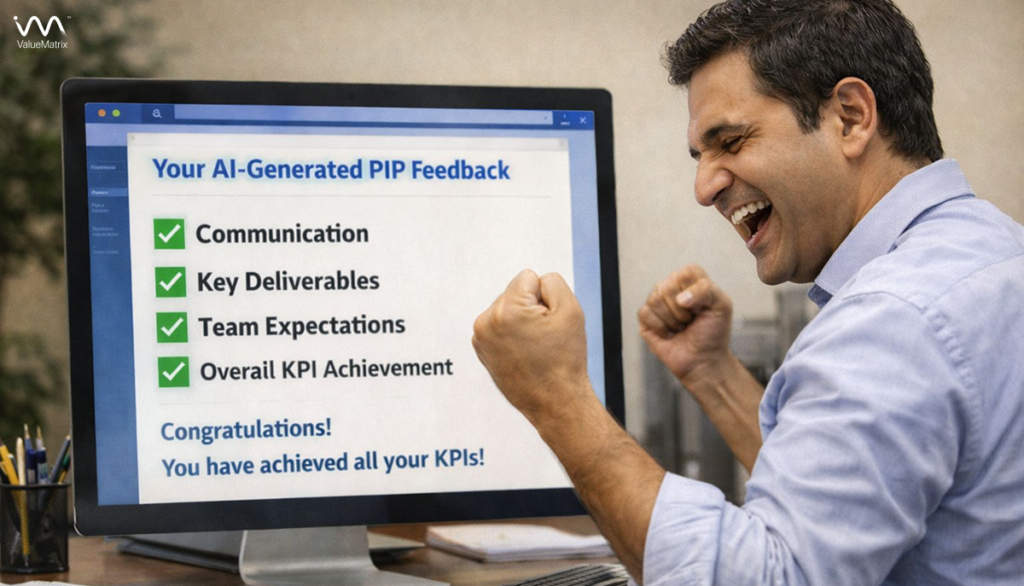

From Vague to Verifiable

Manual PIPs are notorious for vague instructions like “be more proactive.” AI pushes a shift toward numerical precision within an AI performance improvement plan. An AI-driven plan might state that an employee needs to reduce error rates by 15% across 200 units. While the math behind it is complex, the goal itself is clear, measurable, and gives the employee a visible finish line to work toward confidently.
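
The arithmetic behind such a target can be sketched in a few lines. This assumes the 15% is a relative reduction (15% fewer errors than the baseline rate) rather than a drop of 15 percentage points, and uses a hypothetical baseline of 12 errors in the last 200 units.

```python
import math

def max_errors_allowed(baseline_error_rate, relative_reduction, units):
    """Largest whole number of errors that still meets the reduced target rate.

    Assumes the reduction is relative to the baseline rate, not a fall of
    that many percentage points.
    """
    target_rate = baseline_error_rate * (1 - relative_reduction)
    return math.floor(target_rate * units)

# Hypothetical baseline: 12 errors in 200 units, i.e. a 6% error rate.
baseline = 12 / 200
# Target rate is 6% x 0.85 = 5.1%, and 5.1% of 200 units is 10.2 errors.
print(max_errors_allowed(baseline, 0.15, 200))  # 10
```

Stating the finish line as "no more than 10 errors in the next 200 units" is the kind of concrete goal the paragraph describes.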

Transparency through Explainability

Modern AI tools are moving toward Explainable AI, which provides a clear rationale for each finding. Instead of a flat “fail,” AI appraisal systems highlight the data points behind a result, such as response times or peer feedback scores. This makes decisions easier to question and discuss with managers, encouraging open and honest conversations.

The Clarity of Alignment

AI can map individual goals directly to company-level objectives using performance feedback AI tools. This helps employees understand why certain targets feel demanding. The goal is not random, but connected to team success or business continuity, reducing the sense that the PIP is an arbitrary punishment.

Audit Trails for Fairness

Every AI decision leaves a digital trail, supporting AI fairness in the workplace. If a target feels unfair, employees can compare it with historical data from peers in similar roles through automated employee evaluation records to check whether expectations are reasonable. This transparency acts as a safety net against unrealistic or biased demands.
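
A minimal sketch of that peer check: given hypothetical historical results from peers in the same role, it reports what share of them met or beat a proposed target. The function name and the data are illustrative assumptions.

```python
from bisect import bisect_left

def share_of_peers_meeting(target, peer_results):
    """Fraction of peers whose historical result met or beat the target."""
    ordered = sorted(peer_results)
    # bisect_left counts how many peers fell strictly short of the target.
    fell_short = bisect_left(ordered, target)
    return (len(ordered) - fell_short) / len(ordered)

# Hypothetical monthly units processed by ten peers in the same role.
peers = [180, 195, 200, 205, 210, 210, 215, 220, 230, 240]
print(share_of_peers_meeting(210, peers))  # 0.6
print(share_of_peers_meeting(260, peers))  # 0.0
```

A target that none of the peers has ever reached is a strong signal that the expectation, not the employee, deserves a second look.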

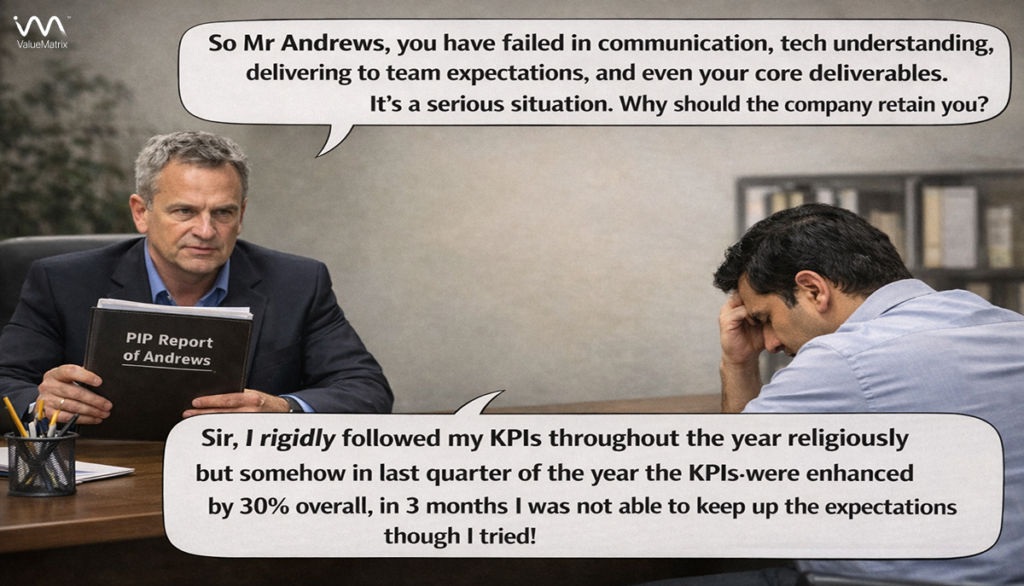

Reducing Goal-Post Shifting

A common fear is that PIP requirements will change midway. AI-generated PIPs lock criteria at the start, aligning with AI PIP HR ethics. Since targets are data-defined, managers cannot arbitrarily raise the bar, giving employees a stable and fair recovery path.
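
In code, "locking" criteria can be as simple as an immutable record created when the plan starts. The sketch below uses a frozen Python dataclass; `PipCriteria` and its fields are hypothetical, and in practice such a lock would also need access controls and an audit log, since immutability in one program does not stop someone from editing the stored data.

```python
from dataclasses import FrozenInstanceError, dataclass
from datetime import date

@dataclass(frozen=True)
class PipCriteria:
    """PIP targets fixed when the plan starts; frozen=True blocks reassignment."""
    metric: str
    target: float
    start: date
    end: date

# Hypothetical plan: keep the error rate at or below 5.1% for the plan window.
criteria = PipCriteria("error_rate", 0.051, date(2024, 1, 8), date(2024, 3, 29))

try:
    criteria.target = 0.03  # attempt to move the goal posts mid-plan
except FrozenInstanceError:
    print("Criteria are locked for the duration of the plan.")
```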

Real-Life Scenario: LivePerson

The tech company LivePerson uses AI-powered tools like Betterworks to help employees create meaningful, data-driven goals. This approach reinforces HR automation ethics by ensuring targets are not just steep, but grounded in real performance needs and business outcomes.

Reference: How Live Person Uses AI to Elevate Experience – Betterworks

3. The Worry About Constant Surveillance

The Logic

Constant tracking can make employees feel like they are being watched by a digital microscope, leading to surveillance fatigue. This concern is often linked to employee monitoring AI. However, when positioned as coaching rather than policing, the same data can support an AI performance improvement plan and even save a career.

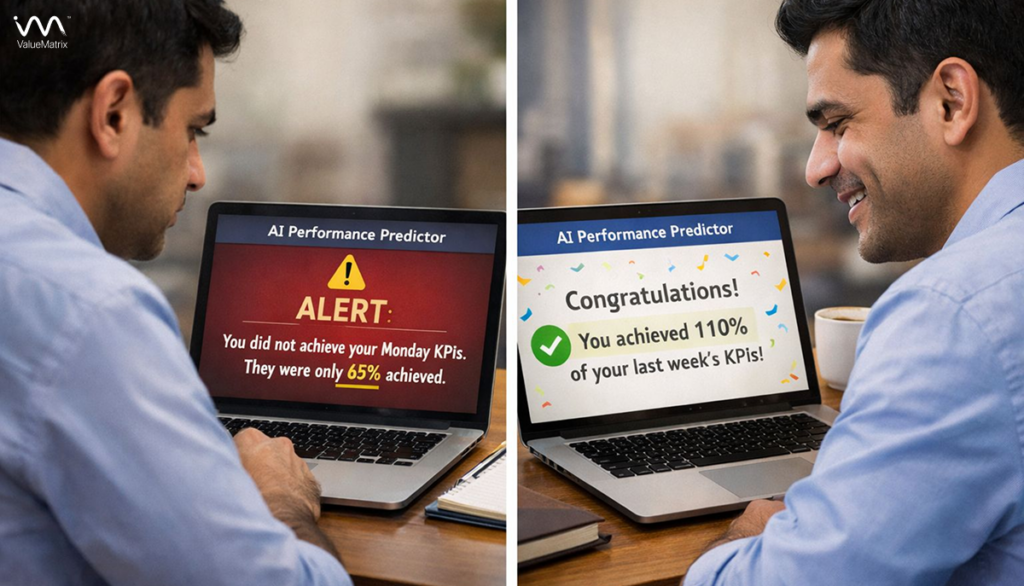

The Early Warning System

Manual reviews are reactive: employees often discover they are failing months after the fact. In contrast, AI in performance management enables timely nudges. If a salesperson’s activity drops on Monday, the system can flag it on Tuesday, allowing a quick course correction before the issue escalates into a formal PIP. This early intervention often prevents unnecessary stress and protects long-term performance.
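
One simple way such a nudge could work is to compare the latest day against a rolling baseline of the days before it. The sketch below assumes hypothetical daily call counts, and its `window` and `threshold` defaults are illustrative rather than recommended values.

```python
from statistics import mean

def flag_activity_drop(daily_activity, window=5, threshold=0.7):
    """Return True if the latest day falls below threshold x the prior window's average.

    `window` and `threshold` are illustrative defaults, not recommended values.
    """
    if len(daily_activity) <= window:
        return False  # not enough history to form a baseline
    baseline = mean(daily_activity[-window - 1:-1])
    return daily_activity[-1] < threshold * baseline

# Hypothetical logged sales calls per day; Monday's count is the last entry.
calls = [30, 28, 32, 31, 29, 12]
if flag_activity_drop(calls):
    print("Nudge: activity dropped sharply; schedule a quick check-in.")
```

The flag is meant to start a conversation, not to feed a formal record; the baseline here is 30 calls, so anything under 21 triggers the nudge.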

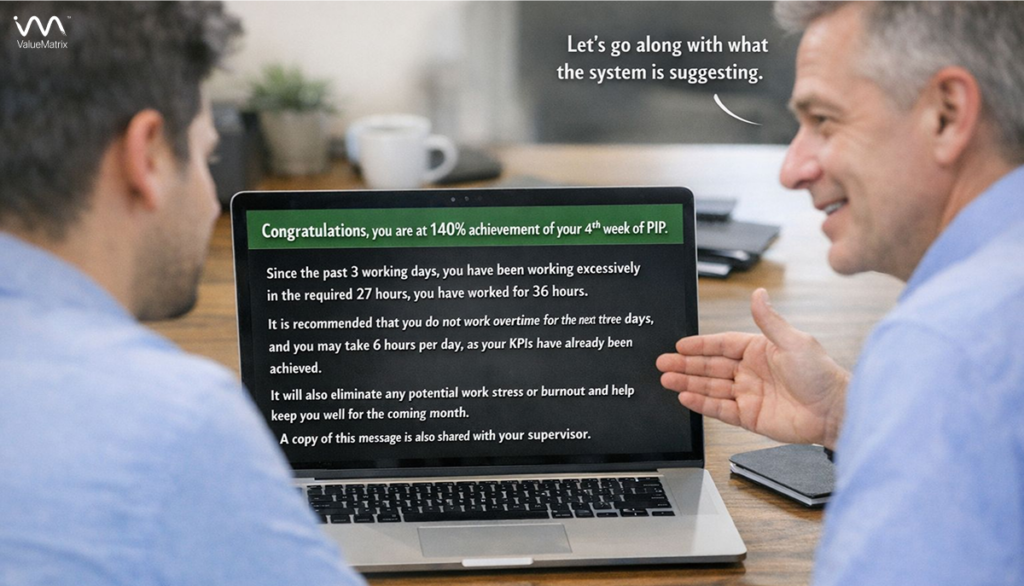

Identifying Burnout Before It Hits

Advanced monitoring tools can detect well-being signals such as excessive after-hours work. When aligned with HR automation ethics, this data is used to offer support rather than punishment. Smart organizations intervene during a rough personal phase, turning surveillance into a preventive mental health mechanism that stops performance decline before it begins.

Empowering Autonomy through Insights

When employees can access their own dashboards created through performance feedback AI tools, they can self-correct without managerial pressure. The AI acts as a private mirror, helping individuals regain control over their automated employee evaluation journey without public embarrassment or shame.

Reducing Performative Work

Traditional offices reward visibility over value, encouraging people to stay late just to look busy. AI focuses on real output instead of seat time, reinforcing AI appraisal systems that value results. Employees are freed from performative behavior and can concentrate on meaningful contributions.

Contextual Performance Analysis

AI can evaluate not just volume but task difficulty. By applying complexity scoring, it distinguishes slow work caused by struggle from slow work caused by handling the most difficult problems. This supports the principles of AI fairness in the workplace and ensures deep thinkers are not penalized for a lack of speed.
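
A minimal sketch of complexity weighting, assuming a hypothetical 1 (routine) to 5 (hardest) complexity scale assigned to each task. It measures complexity points delivered per hour instead of raw task counts; how the scale itself is assigned and validated is the hard part and is not shown here.

```python
from dataclasses import dataclass

@dataclass
class Task:
    hours: float
    complexity: int  # hypothetical scale: 1 (routine) to 5 (hardest)

def complexity_weighted_rate(tasks):
    """Complexity points delivered per hour, rather than raw tasks per hour."""
    total_points = sum(t.complexity for t in tasks)
    total_hours = sum(t.hours for t in tasks)
    return total_points / total_hours

# Two hypothetical employees, each working 40 hours.
fast_routine = [Task(hours=2, complexity=1) for _ in range(20)]  # 20 easy tasks
slow_hard = [Task(hours=8, complexity=5) for _ in range(5)]      # 5 hard tasks

print(len(fast_routine), complexity_weighted_rate(fast_routine))  # 20 0.5
print(len(slow_hard), complexity_weighted_rate(slow_hard))        # 5 0.625
```

By raw count the second employee looks four times slower, yet on a complexity-weighted basis they deliver more.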

Real-Life Scenario: Microsoft

Through Viva Insights, Microsoft analyzes patterns like meeting load and focus time. Rather than acting as a surveillance tool, it reflects personal productivity data back to employees. This approach supports ethical employee monitoring AI, helping individuals manage burnout and time effectively without direct managerial intervention.

Reference: Microsoft Viva Insights: Productivity or Surveillance?

4. Standardization and Legal Defensibility

The Logic

For employers, a PIP functions as a legal safeguard. For employees, it is about fairness and dignity. By embedding AI appraisal systems into evaluations, organizations ensure that the same ruler measures performance for everyone, regardless of geography, gender, or personal rapport. This consistency strengthens trust and makes AI in performance management feel predictable rather than arbitrary.

The Manager Lottery Cure

In large organizations, performance outcomes often depend on whether a manager is strict or lenient. An AI performance improvement plan removes this imbalance by applying universal benchmarks. Career outcomes are tied to evidence-based standards instead of managerial personality, improving long-term confidence in the system.

A Shield Against Litigation

When PIPs are supported by objective metrics rather than gut instinct, they become more legally defensible. This aligns with HR automation ethics, reducing wrongful termination risks while ensuring that difficult decisions are grounded in transparent data instead of personal bias.

Uniformity in Global Teams

Cultural communication differences are frequently misread as performance gaps. AI applies consistent KPIs to employees across locations, supporting the principles of AI fairness in the workplace and preventing global talent from being unfairly penalized for regional or cultural styles.

Accountability for Leaders

Standardization also evaluates managers themselves. If patterns show certain leaders repeatedly placing diverse or high-performing employees on PIPs, the data flags it. This introduces ethical oversight into AI in performance management, discouraging toxic leadership behaviors.
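
The flagging step can be sketched as a comparison of each manager's PIP rate with the organization-wide rate. The manager IDs, team sizes, and the 2x multiplier below are hypothetical. This sketch looks only at overall rates; checking whether PIPs concentrate on diverse or high-performing employees would mean running the same comparison on those subgroups, and any flag is a prompt for human review rather than proof of misconduct.

```python
from collections import Counter

def flag_outlier_managers(pip_records, headcount, multiplier=2.0):
    """Flag managers whose PIP rate exceeds multiplier x the organization-wide rate.

    pip_records: list of manager IDs, one entry per PIP issued.
    headcount: dict mapping manager ID -> number of direct reports.
    """
    pips = Counter(pip_records)
    org_rate = len(pip_records) / sum(headcount.values())
    flagged = {}
    for manager, reports in headcount.items():
        rate = pips[manager] / reports  # Counter returns 0 for managers with no PIPs
        if rate > multiplier * org_rate:
            flagged[manager] = round(rate, 2)
    return flagged

# Hypothetical data: IDs and team sizes are illustrative only.
headcount = {"mgr_a": 10, "mgr_b": 12, "mgr_c": 8, "mgr_d": 10}
pip_records = ["mgr_a", "mgr_c", "mgr_c", "mgr_c", "mgr_c", "mgr_b"]
print(flag_outlier_managers(pip_records, headcount))  # {'mgr_c': 0.5}
```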

Consistency at Scale

As organizations grow, review quality often declines. Automated employee evaluation ensures that the thousandth PIP receives the same structured attention as the first, preserving quality and fairness even at high volume.

Real-Life Scenario: Amazon

Amazon uses standardized, data-driven performance metrics in its fulfillment centers. Automated tracking of Time Off Task creates uniform benchmarks across teams and supports consistent performance interventions, demonstrating how AI performance improvement plans scale fairness while maintaining legal defensibility.

Reference: Inside Amazon’s Automated Tracking Systems – The Verge

5. From Punishment to Personal Growth Roadmaps

The Logic

Most PIPs still hand employees a generic “do better” checklist. AI performance improvement plans change this by turning the PIP into a kind of personal GPS. It maps a clear growth route based on what has actually worked for thousands of people who faced similar performance challenges, making the path forward feel realistic and achievable rather than intimidating.

Precision Training Recommendations

AI in performance management does not stop at identifying a skill gap. It guides employees to the exact training module, mentor, or stretch assignment that fits their learning style. This shifts the PIP from feeling like a reprimand to feeling like a genuine investment, supporting ethical AI appraisal systems and higher recovery rates.

Predictive Success Mapping

By analyzing patterns from employees who successfully improved, performance feedback AI tools can forecast which skills will matter most next. This helps employees move beyond survival mode and align development with future roles, quietly improving long-term relevance and AI fairness in the workplace.

Adaptive Learning in Real Time

When an employee struggles with part of the plan, automated employee evaluation systems can adjust resources or learning intensity instantly. This keeps the PIP flexible and supportive instead of rigid, reducing failure caused by one-size-fits-all expectations.

Recognizing Non-Traditional Talent

AI can surface soft skills and traits that suggest a better role fit elsewhere. Instead of forcing termination, ethical AI in performance management may recommend redeployment, preserving talent and reinforcing HR automation ethics.

Micro-Learning Integration

Rather than long training sessions, AI embeds short learning moments into daily work. These five-minute lessons reduce cognitive load and make improvement feel natural, aligning with responsible employee monitoring AI practices.

Real-Life Scenario: Workday

Workday uses AI-driven tools to deliver personalized development insights. By identifying skill gaps and suggesting internal stretch assignments, its system can transform a traditional PIP into a focused, individualized career development roadmap.

Reference: Using AI for Personalized and Bias-Free Performance – Workday

6. Focusing Human Effort on Coaching

The Logic

Nearly 80% of a traditional PIP is spent on paperwork and documentation. AI in performance management takes over this operational load, freeing managers to focus on what only humans do best: empathy, active listening, and meaningful support during a difficult phase. This shift makes AI performance improvement plans feel more humane and effective.

From Record-Keeper to Coach

Managers usually spend hours collecting examples to justify a PIP. Automated employee evaluation handles this documentation automatically. One-on-one time then shifts toward coaching, removing obstacles, and guiding employees forward, slowly turning a tense process into a collaborative improvement effort.

Reducing “The Judge” Tension

Being both judge and coach puts managers in an uncomfortable role. When AI appraisal systems manage objective records, managers can stay aligned with the employee. This separation lowers stress, improves trust, and supports AI fairness in the workplace during sensitive conversations.

Enhancing Communication Quality

AI-powered performance feedback tools can surface sentiment insights or suggest constructive discussion prompts. This keeps conversations focused on solutions rather than complaints, making PIP meetings feel like supportive check-ins instead of interrogations.

Real-Time Support for Managers

Not every manager is a natural coach. Ethical HR automation tools can guide managers on how to frame feedback clearly and compassionately, helping them motivate rather than demoralize employees and reinforcing HR automation ethics at scale.

Eliminating the “Surprise” Factor

Traditional PIPs often feel sudden and shocking. Continuous feedback through employee monitoring AI, when used responsibly, turns the PIP into a natural extension of ongoing dialogue, reducing anxiety and keeping focus on improvement instead of blame.

Real-Life Scenario: Betterworks

Organizations using Betterworks’ AI-powered Feedback Assist report a 50 to 75 percent reduction in review-writing time. That reclaimed time is redirected toward deeper coaching conversations, allowing managers to act as mentors rather than paperwork administrators.

Reference: The Pivotal Role of AI in Performance Management – Betterworks

7. How ValueMatrix.AI Empowers Organizations to Build Fairer PIPs

The Logic

At ValueMatrix.AI, we believe the purpose of technology is not to replace human judgment but to sharpen it with consistency, context, and fairness. Our platform strengthens AI performance improvement plans by replacing gut feel with structured talent intelligence, making AI in performance management more explainable, balanced, and human-centered rather than cold or punitive.

Eliminating Implicit Bias

ValueMatrix applies advanced algorithms aligned with psychological frameworks to filter unconscious bias from evaluations. By grounding decisions in the principles of AI fairness in the workplace, assessments focus on outcomes and behaviors instead of demographics, personal affinity, or subjective managerial preference, supporting ethical AI appraisal systems.

Holistic Multi-Criteria Evaluations

We move beyond resumes and raw KPIs. Our AI assesses behavioral patterns, team dynamics, soft skills, and personality indicators to understand why performance gaps exist. Often, the issue is role mismatch rather than capability, and automated employee evaluation helps surface better internal role alignment.

Predictive Retention and Growth

ValueMatrix links historical performance data with future skill requirements and upcoming project needs. This allows HR teams to intervene early with learning paths or mentoring, supporting AI PIP HR ethics and preventing unnecessary PIPs before they are triggered.

Data-Driven Decision Support

Managers receive clear, customizable reports that offer defensible evidence for decisions. This strengthens compliance while giving employees transparent expectations, reducing anxiety around employee monitoring AI, and reinforcing trust through responsible HR automation ethics.

Explore more from our team:

- Can AI Improve Candidate & Employee Experience? – How objective AI leveling prevents the “manager lottery.”

- AI Talent Intelligence: Data-Driven Transformation – Understand the science behind personalized development tracks.

- Make Sure Inclusivity is part of Your AI Implementation Plan – Why ethics must be the soul of your HR technology.

Conclusion: The Future Is a Hybrid Balance

AI-generated PIPs are neither a complete blessing nor an outright curse. Their real value emerges when thoughtfully designed, with careful governance, ethical oversight, and human judgment guiding their application. By integrating AI in performance management, organizations can replace subjective and inconsistent evaluations with AI appraisal systems that promote fairness and transparency, while also offering AI performance improvement plans tailored to each employee’s growth trajectory.

Throughout this article, we’ve seen how AI can directly address the deepest anxieties employees carry around performance reviews. It reduces fears of opaque decisions and black box evaluations, delivering measurable clarity, objective benchmarks, and actionable feedback. By shifting focus from surveillance to coaching supported by AI insights, employees receive clearer expectations and personalized pathways for improvement, while managers spend less time on administrative burdens and more time providing empathy and context, aligning with AI PIP HR ethics principles.

For employers, AI ensures standardization, scalability, and legal defensibility while fostering employee monitoring AI systems that are ethically applied. For employees, it offers confidence, constructive feedback, and a manager who can act as a true coach. By combining HR automation ethics with automated employee evaluation, organizations promote fairness, consistency, and a culture where high performance is recognized and nurtured.

The future of performance management lies in a hybrid model. AI appraisal systems handle data, structure, and insight, while humans bring emotional intelligence, judgment, and guidance. When these forces work in harmony, AI in performance management transforms the PIP into what it was always meant to be: not a looming threat but a transparent, supportive, and growth-oriented journey. This model demonstrates AI fairness in the workplace, reinforces performance feedback AI tools, and ensures that technology strengthens the human connection rather than replacing it.

FAQs

What is an AI-generated PIP?

It is a PIP that uses data and analytics to define goals, track progress, and reduce subjectivity.

How does AI make PIPs fairer?

AI standardizes evaluations and minimizes bias caused by mood, favoritism, or recency effects.

Are AI-generated PIPs transparent?

When designed with explainability, they provide clear metrics and traceable decision logic.

Does AI monitoring during a PIP feel like surveillance?

It can, but when used responsibly, it functions more as early coaching than constant surveillance.

How does AI support employee growth during a PIP?

AI enables personalized learning paths, adaptive feedback, and targeted skill recommendations.

What are the ethical concerns with AI-generated PIPs?

Key concerns include bias in data, lack of transparency, and misuse of monitoring tools.

Can AI make PIPs more legally defensible?

Yes. Data-backed, consistent evaluations improve documentation and defensibility.

What role should managers play in an AI-driven PIP?

Managers should focus on empathy, context, and coaching while AI handles structure and data.